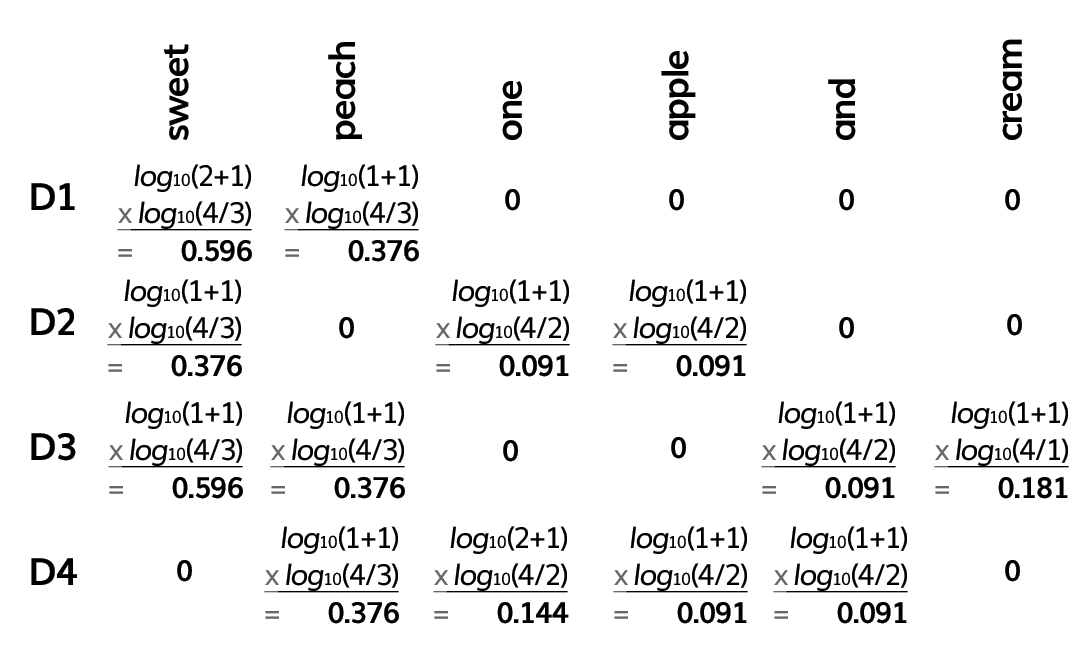

Figure A

Instructor solution

Speech and Language Processing: Vector Semantics and Embeddings (pages 12-14)

Jurafsky, D., Martin, J.H.: Speech and Language Processing (3rd ed.). Retrieved from https://web.stanford.edu/~jurafsky/slp3/6.pdf (Oct 20, 2025)
